|

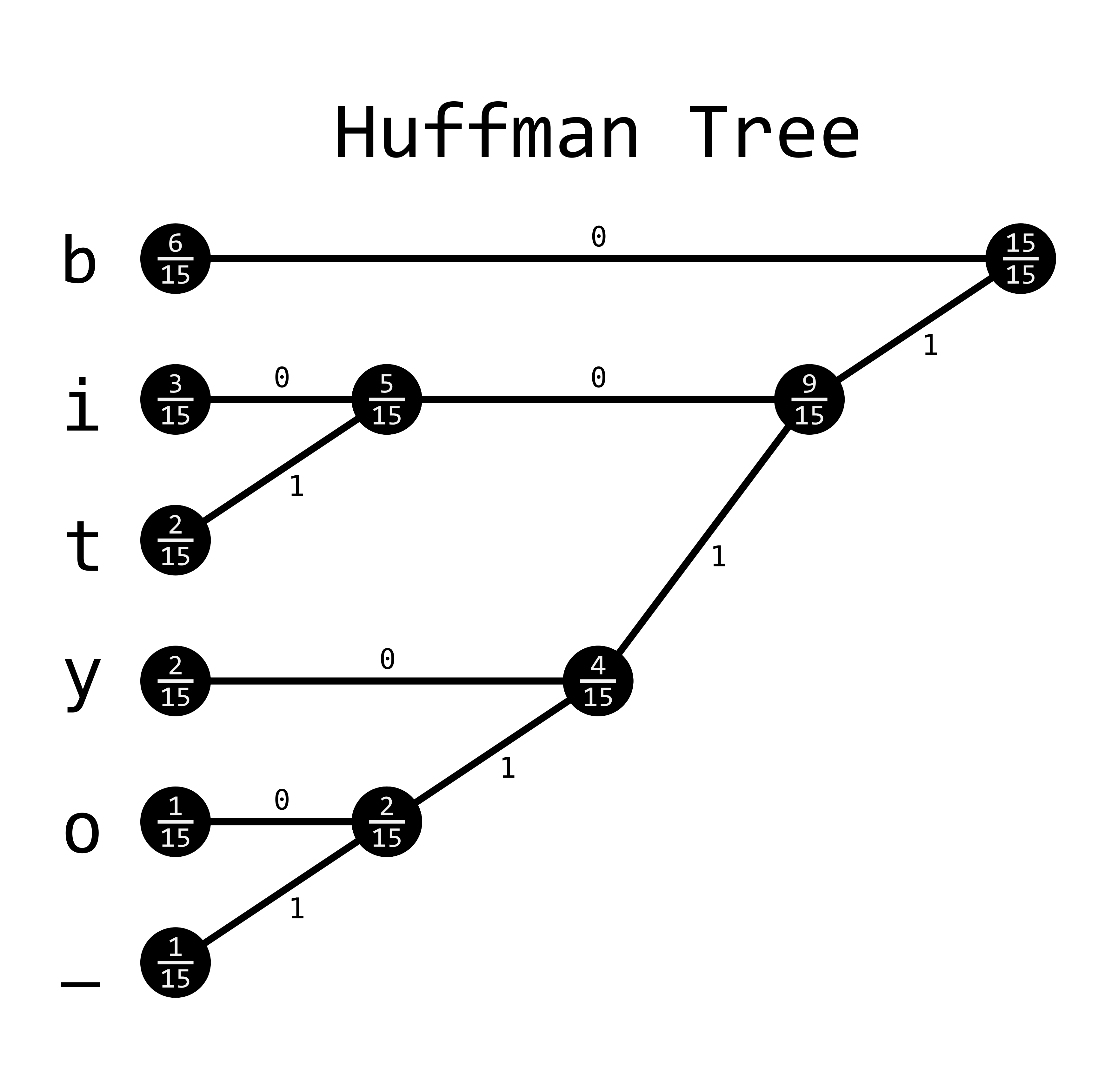

Note that we’re encoding full words here. So now we have a nice Huffman tree that provides binary codes for each full word in the vocabulary. Here’s what the tree looks like in tree form: Or you can just skip the excitement and the fun and click on “end” to skip to the final state of memory after this part of the process. Try to follow the code above and step through this to understand how we’re building the tree. You can either click “play” and watch the magic happen, or you can click “next” for each step through of each change in memory in the algorithm. The “grayer” columns (indices 9 to 18) are used for non-leaf nodes in the tree. Each white column in this table represents one of our 8 words. Step through the below animation for a walkthrough of how this works in our “wood chuck” example. The magic is in the source code: void CreateBinaryTree () part 1: tree construction word2vec’s CreateBinaryTree() I wrote this post to explain what I found. īefore reading about word2vec, I was familiar with Huffman coding as a means of lossless data compression, but I was confused about how exactly the tree is constructed, and then how it is used in word2vec’s “hierarchical softmax” method. For the “hierarchical softmax” method, a Huffman binary tree is used. One is the so called “hierarchical softmax” and the other is a process called “Noise Contrastive Estimation”. There are two major approaches to training the word2vec model. PriorityQueue q = new PriorityQueue(n, new ImplementComparator()) Print(' %-4r |%12s' % (char, huffmanCode))Ĭlass ImplementComparator implements Comparator Nodes = sorted(nodes, key=lambda x: x, reverse=True) # Main function implementing huffman codingĭef huffman_code_tree(node, left=True, binString=''):ĭ.update(huffman_code_tree(l, True, binString + '0'))ĭ.update(huffman_code_tree(r, False, binString + '1'))įreq = sorted(ems(), key=lambda x: x, reverse=True) For each non-leaf node, assign 0 to the left edge and 1 to the right edge.Īssign 0 to the left edge and 1 to the right edgeĭef _init_(self, left=None, right=None):.Repeat steps 3 to 5 for all the characters. Repeat steps 3 to 5 for all the characters.Remove these two minimum frequencies from Q and add the sum into the list of frequencies (* denote the internal nodes in the figure above).Set the value of the z as the sum of the above two minimum frequencies. Assign the minimum frequency to the left child of z and assign the second minimum frequency to the right child of z. Make each unique character as a leaf node.These are stored in a priority queue Q.Ĭharacters sorted according to the frequency Sort the characters in increasing order of the frequency.Calculate the frequency of each character in the string.Huffman coding is done with the help of the following steps.

The tree created above helps in maintaining the property. a code associated with a character should not be present in the prefix of any other code. Huffman Coding prevents any ambiguity in the decoding process using the concept of prefix code ie. Once the data is encoded, it has to be decoded. Huffman coding first creates a tree using the frequencies of the character and then generates code for each character. Using the Huffman Coding technique, we can compress the string to a smaller size. Thus, a total of 8 * 15 = 120 bits are required to send this string. There are a total of 15 characters in the above string. Initial stringĮach character occupies 8 bits. Suppose the string below is to be sent over a network. Huffman Coding is generally useful to compress the data in which there are frequently occurring characters. Huffman Coding is a technique of compressing data to reduce its size without losing any of the details. Decrease Key and Delete Node Operations on a Fibonacci Heap.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed